The post-truth moment we live in is defined less by outright lies than by attacks on objectivity. While audiences sanctify subjective experience, they treat “objective truth” as naïve, biased, or culturally contingent. But there’s a paradox: audiences generally reward emotional narratives, but they tend to punish perceived overreach—“Your truth is poignant, but it’s not mine; don’t impose it on me.”

For human rights reporting, this paradox is a conundrum. We seek the truth but perform certainty, and there’s tension between these two attitudes. In the pursuit of certainty, moral criteria sometimes trump accuracy. Claims that exceed what factual evidence can support may win short-term attention, but they’re fragile. Opponents will exploit any weakness to discredit reports and to spread the idea that human rights reporting is unreliable.

What’s to be done? Fortunately, there is a middle way between doubling down on certainty and toning down advocacy. Transparent calibration—a clear system of “confidence levels” for claims—allows for the communication of uncertainty without loss of clarity. And it’s been successfully tested in other fields.

Confidence-level reporting: a proven tool

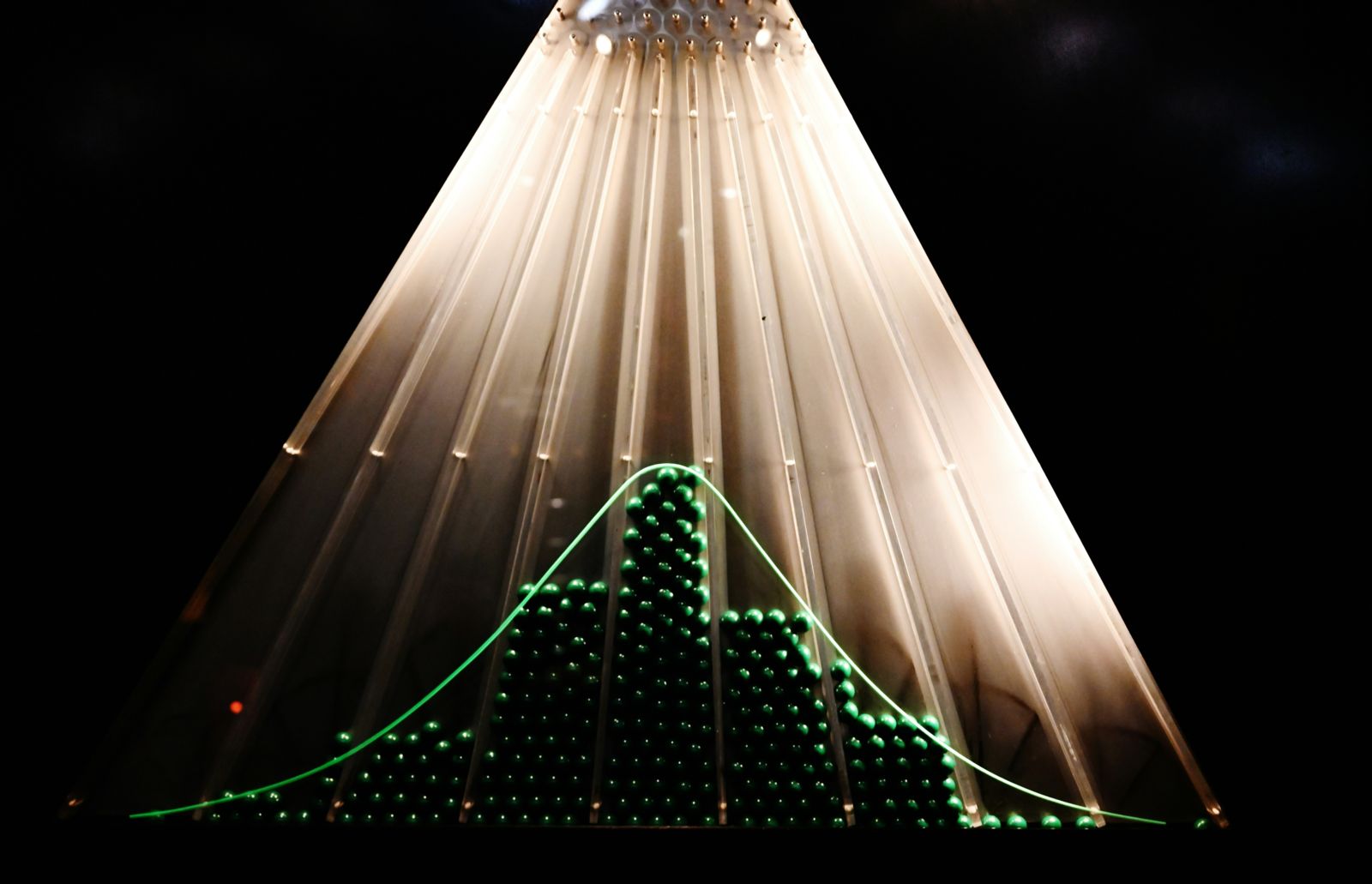

The problem is how to make intelligible statements while admitting that evidence is partial. Actors in other ecosystems—statistics, climatology, public health—have devised methodologies to address this problem and express uncertainty through confidence levels.

For political pollsters, confidence reporting is a routine process: state your assessment, show the basis for it, and indicate margins of error. Climate science offers another mature model. The IPCC, for instance, consistently communicates uncertainty by separating the quality of available evidence from the probability of outcomes. Uncertainty is embedded in public health and pharmaceutical research protocols and directly linked to the field’s legitimacy. Even professionals outside “hard” science have devised ways of reconciling uncertainty and clarity. Journalism, for instance, treats correction as an integrity norm, complete with clear policies, visible edits, and retractions. This visible accountability culture is a credibility asset for “mainstream” journalism—and is notably absent from social media. All these ecosystems differ, but they acknowledge that while evidence can be messy, credibility is key. Confidence language preserves credibility even when certainty is out of reach.

Human rights reporting, by contrast, rarely acknowledges uncertainty. It lacks consistent ways to admit when evidence is partial and to explain the implications of this partiality. The fear that opponents and abusers will weaponize any admission of error or inaccuracy is legitimate, but downplaying uncertainty doesn’t protect human rights advocates from bad-faith attacks. It makes them more vulnerable. When reports sound overconfident or impossibly definitive, critics don’t just contest one claim—they attack the messengers and call the whole human rights endeavor into question.

Confidence language is a defense, but it’s not defensive. It’s a way of saying: “Here’s what we know, how we know it, and how sure we are.”

A basic confidence ladder for human rights reports

What human rights advocates need isn’t a statistical treatise; it’s a basic confidence ladder: simple to use and legible to the public but hard to criticize. A workable start could involve the following levels: “High confidence” (consistent patterns; multiple independent sources corroborated by official records, forensics, or open-source materials); “Moderate confidence” (credible testimonies, partial corroboration; patterns that aren’t completely clear); “Needs more research” (plausible prima facie allegations that are not yet sufficiently corroborated).

How would this look in practice? For an incident-level claim: “We assess with moderate confidence that extrajudicial executions took place at Site X, based on witness accounts; forensic review and physical access are needed.” For a pattern-level claim (where inferences about frequency, spread and intent raise the risk of overreach): “We have high confidence that acts of torture increased in District Z, based on interviews with 100 surviving victims. We have moderate confidence that this reflects a deliberate policy rather than isolated practice; further investigations are needed.”

The risk is sounding technocratic. And obviously, this is only applicable to reports. It isn’t practical for press releases, dispatches, and other shorter products that need quick media response, and it isn’t necessary when the evidence is absolutely compelling (paralleling the “beyond reasonable doubt” standard in criminal justice).

A basic confidence ladder structure, complemented with appropriate rules of use, has the potential to build trust and formalize credibility at a level close to another standard: “reasonable grounds to believe.” Minimum rules of use could include stating the evidence (what sources? gathered how?), naming possible alternatives or competing explanations (with their own confidence levels, if possible), and being explicit about constraints, limitations, and what’s needed to strengthen the findings.

The payoffs: rigor and advocacy leverage

The immediate payoff is obvious: avoiding overreach, which has rhetorical value in its own right. But there are deeper payoffs.

First, a basic confidence ladder gives us a disciplined way to communicate uncertainty. Done well, it conveys rigor, not weakness, and it acknowledges that intellectual honesty means recognizing that some conclusions are firmer than others. It also has the potential to reduce polarization by forcing critics (who will not disappear) to shift the debate from “you made this up” to “your confidence level is too high (or too low).” Such an argument over methods is much healthier terrain than insinuations of bias or dishonesty.

A basic confidence ladder would also strengthen the assessment of cause and effect. Human rights issues often involve compound variables (political will, institutional capacity, structural inequality, personal incentives), and the ladder offers an honest means of dealing with complexity instead of pretending it doesn’t exist. A culture of self-correction could be grounded in errata sections, dated evidence updates, or even “What would change this assessment” notes embedded in reports.

Confidence reporting also has strategic value. “Needs more research” isn’t a weakness or retreat; it’s an argument for action. Advocates could deploy it to make the case for inquiry into serious and plausible allegations: pushing authorities to open investigations, grant access to NGOs or UN bodies, protect victims and witnesses, or preserve evidence.

Last, there’s a benefit to norms within the field. Confidence reporting is a shield against both accusations of ideological bias and the excesses of subjectivity. It doesn’t dismiss lived experience but recognizes that testimony, like any evidence, must be verified. That combination—moral commitment and methodological discipline—makes the human rights movement harder to attack.

Transparent calibration is a way of making claims easier to scrutinize but harder to dismiss. It positions truth-telling, rather than narrative certainty, as the foundation of the human rights project. In a post-truth era, that kind of disciplined honesty is a strength.